Overview

This guide walks you through instrumenting your Python-based LangChain application and streaming telemetry data to SigNoz Cloud using OpenTelemetry. By the end of this setup, you'll be able to monitor AI-specific operations such as agent reasoning steps, tool invocations, API calls, and intermediate chain executions within LangChain, with detailed spans capturing request durations, tool inputs and outputs, model responses, and metadata throughout the agent’s decision-making process.

Instrumenting your agent workflows with telemetry enables full observability across the reasoning and action pipeline. This is especially valuable when building production-grade AI applications, where insight into agent behavior, latency bottlenecks, tool call performance, and response accuracy is essential. With SigNoz, you can trace each user request end-to-end—from the initial prompt through every intermediate reasoning step, tool execution, and final answer—and continuously improve performance, reliability, and user experience.

To get started, check out our example LangChain trip planner agent with OpenTelemetry-based monitoring (via OpenInference). View the full repository here.

You can also check out our LangChain SigNoz MCP agent here.

Prerequisites

- A Python application using Python 3.8+

- LangChain integrated into your app

- Basic understanding of AI agents and tool-calling workflows

- A SigNoz Cloud account with an active ingestion key

- pip installed for managing Python packages

- Internet access to send telemetry data to SigNoz Cloud

- (Optional but recommended) A Python virtual environment to isolate dependencies

Instrument your LangChain Python application

To capture detailed telemetry from LangChain without modifying your core application logic, we use OpenInference, a community-driven standard that provides pre-built instrumentation for popular AI frameworks like LangChain, built on top of OpenTelemetry. This allows you to trace your LangChain application with minimal configuration.

Check out detailed instructions on how to set up OpenInference instrumentation in your LangChain application over here.

Step 1: Install the OpenInference and OpenTelemetry packages

pip install openinference-instrumentation-langchain \
    opentelemetry-exporter-otlp \
    opentelemetry-sdk
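Before moving on, it can help to confirm that the three packages actually landed in the active environment, especially when a virtual environment is involved. The following is a minimal sketch using only the standard library; the helper name missing_packages is hypothetical and not part of OpenInference or OpenTelemetry.

```python
from importlib import metadata

# Distribution names installed in Step 1 of this guide.
REQUIRED_PACKAGES = [
    "openinference-instrumentation-langchain",
    "opentelemetry-exporter-otlp",
    "opentelemetry-sdk",
]


def missing_packages(names):
    """Return the distribution names from `names` that are not installed."""
    missing = []
    for name in names:
        try:
            metadata.version(name)  # raises if the distribution is absent
        except metadata.PackageNotFoundError:
            missing.append(name)
    return missing


if __name__ == "__main__":
    missing = missing_packages(REQUIRED_PACKAGES)
    if missing:
        print("Missing packages:", ", ".join(missing))
    else:
        print("All telemetry packages are installed.")
```

Run it with the same interpreter your application uses; anything it reports as missing was probably installed into a different environment.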

Step 2: Import the necessary modules in your Python application

from opentelemetry.sdk.trace import TracerProvider

from opentelemetry.sdk.trace.export import BatchSpanProcessor

from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter

from opentelemetry.sdk.resources import Resource

from openinference.instrumentation.langchain import LangChainInstrumentor

Step 3: Set up the OpenTelemetry Tracer Provider to send traces directly to SigNoz Cloud

resource = Resource.create({"service.name": "<service_name>"})

provider = TracerProvider(resource=resource)

span_exporter = OTLPSpanExporter(
    endpoint="https://ingest.<region>.signoz.cloud:443/v1/traces",
    headers={"signoz-ingestion-key": "<your-ingestion-key>"},
)

provider.add_span_processor(BatchSpanProcessor(span_exporter))

- <service_name> is the name of your service

- Set the <region> to match your SigNoz Cloud region

- Replace <your-ingestion-key> with your SigNoz ingestion key
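Rather than hardcoding the region and ingestion key in source code, you may prefer to read them from the environment. The sketch below builds the same endpoint and header shown in Step 3. The function name signoz_exporter_settings and the variable names SIGNOZ_REGION and SIGNOZ_INGESTION_KEY are assumptions chosen for illustration, not names defined by SigNoz or OpenTelemetry.

```python
import os


def signoz_exporter_settings(env=None):
    """Build the exporter endpoint and headers from environment variables.

    SIGNOZ_REGION and SIGNOZ_INGESTION_KEY are hypothetical variable names
    used only in this example.
    """
    env = os.environ if env is None else env
    region = env.get("SIGNOZ_REGION")
    ingestion_key = env.get("SIGNOZ_INGESTION_KEY")
    if not region or not ingestion_key:
        raise RuntimeError(
            "Set SIGNOZ_REGION and SIGNOZ_INGESTION_KEY before starting the app"
        )
    return {
        # Same URL pattern as the endpoint in Step 3.
        "endpoint": f"https://ingest.{region}.signoz.cloud:443/v1/traces",
        "headers": {"signoz-ingestion-key": ingestion_key},
    }
```

The returned dictionary uses the same keyword names as the exporter call in Step 3, so it can be unpacked directly: OTLPSpanExporter(**signoz_exporter_settings()). Failing fast on a missing value avoids silently exporting to a malformed URL.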

Step 4: Instrument LangChain using OpenInference

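Use the LangChainInstrumentor from OpenInference to automatically trace LangChain operations. Pass in the tracer provider configured in Step 3 so that spans are exported to SigNoz instead of going to the default global provider:

LangChainInstrumentor().instrument(tracer_provider=provider)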

📌 Important: Place this code at the start of your application logic — before any LangChain functions are called or used — to ensure telemetry is correctly captured.

Your LangChain commands should now automatically emit traces, spans, and attributes.

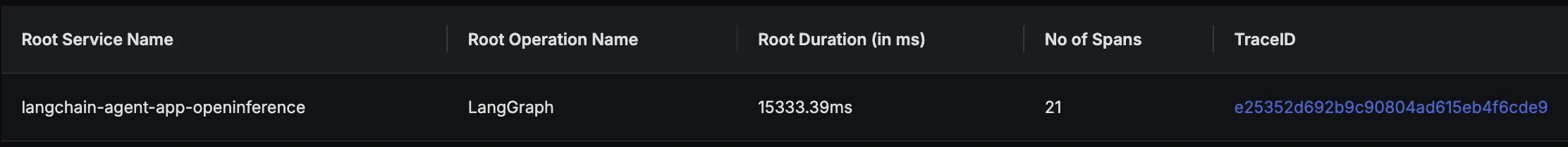

Finally, you should be able to view this data in SigNoz Cloud under the Traces tab.

When you click on a trace ID in SigNoz, you'll see a detailed view of the trace, including all associated spans, along with their events and attributes.

Instrumenting LangChain Applications in JavaScript

You can instrument your LangChain applications in JavaScript using the OpenInference LangChain Instrumentor package.

For detailed guidance on instrumenting JavaScript applications with OpenTelemetry and connecting them to SigNoz, see the SigNoz OpenTelemetry JavaScript instrumentation docs.