This page shows how to use distributed tracing to retrieve detailed telemetry data and see how your applications are performing. It explains:

- How to visualize aggregate metrics from traces

- How to filter your spans

- How to inspect a span

Prerequisites

- This section assumes that your application is already instrumented. For details about how you can instrument your application, see the Instrument Your Application section.

- This section assumes that you are familiar with the basics of monitoring applications.

Open the Traces Section

From the sidebar, select Traces.

Visualize Aggregate Metrics from Traces

SigNoz lets you aggregate your filtered traces with various aggregate functions. For details, refer to the query builder aggregation docs.

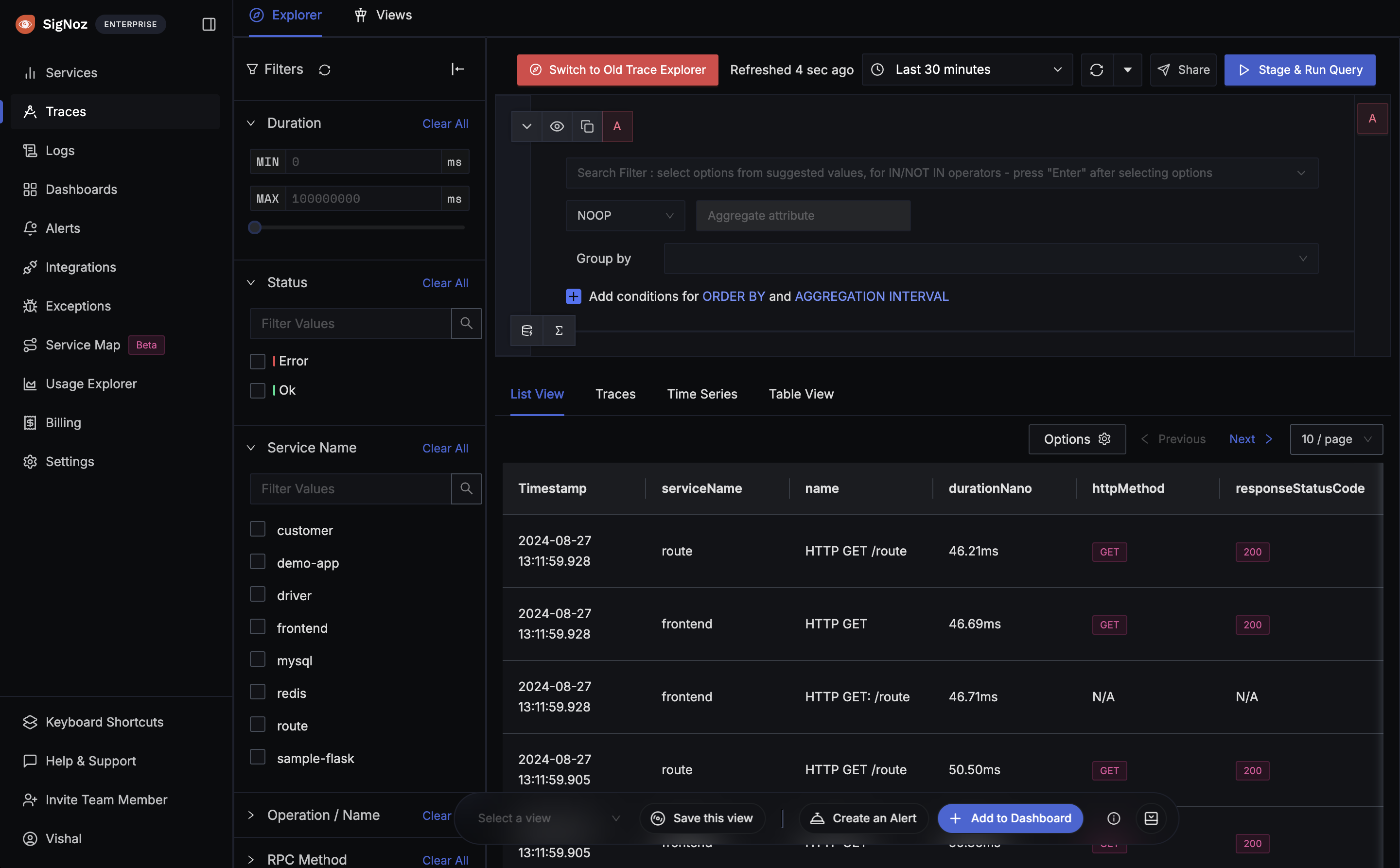

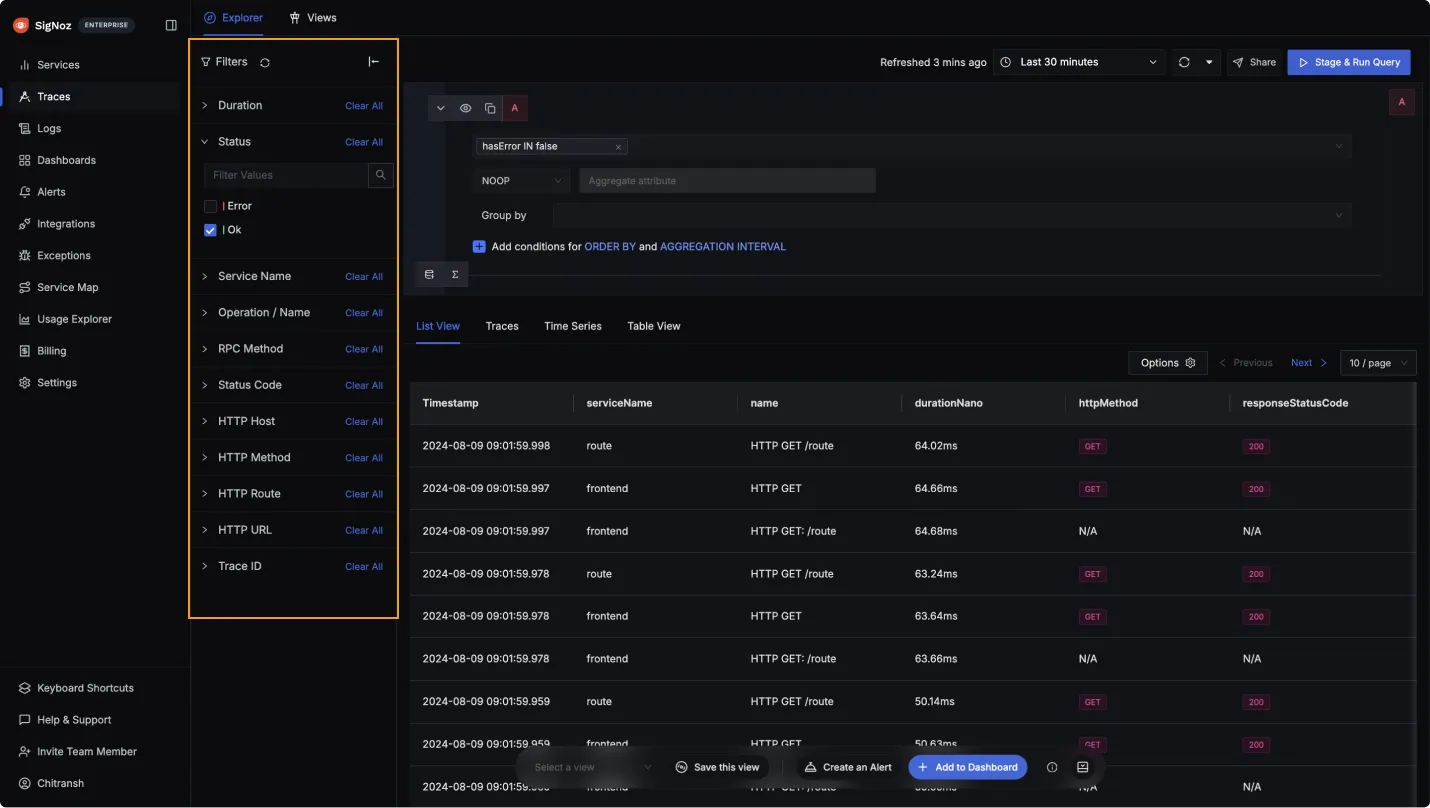

Filter Spans by Tags/Attributes

The Traces Explorer in SigNoz lets you filter spans by various parameters. This helps you quickly find specific spans, making it easier to analyze and debug applications.

There are two ways in which you can filter traces by tags:

- You can select a filter from the Quick Filter. For the available quick filters, see the Quick Filter documentation.

- You can use the query builder. To access the query builder, select the Search Filter input. Then, use the dropdown list to select a key from the list of your attributes, select an operator, and enter a value. When you’ve finished, select the Stage and Run Query button. For more details, see the Query Builder documentation.

Inspect a Span

To further troubleshoot your application, you can select a span from the list to view its details. For details, see the Span Details page.

Missing Spans

A "missing span" occurs when a span's parent span is not found in the collected trace.

Common Causes

Tail Sampling: If you have configured tail sampling in your OpenTelemetry Collector pipeline, the sampler decides which traces to keep based on the spans it has received for each trace. If spans from the same trace are routed to different Collector instances, or arrive after the sampling decision has been made, some spans from a trace may be retained while others are discarded, resulting in incomplete traces with missing spans.

Network Issues: Spans may be dropped in transit due to network issues between your application and the OpenTelemetry Collector, or between the Collector and SigNoz. This can happen due to transient connectivity failures, timeouts, or packet loss.

Misconfigured Services: If a service in your request chain is not exporting its spans to SigNoz, the spans it generates will be missing from the trace. Since its span ID is used as the parent span ID by downstream services, those downstream spans will appear as missing spans.

Every span has two key identifiers: a Span ID (its own unique ID) and a Parent Span ID (the ID of the span that called it). When a service makes a call to a downstream service, it propagates context via the traceparent header. This header carries the current span's Span ID, which the downstream service uses as the Parent Span ID of the new span it creates. A span is flagged as "missing" when no span in the collected trace matches its Parent Span ID — the parent span exists but was never collected.
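To make the header concrete, here is a minimal Python sketch that extracts the Parent Span ID from a `traceparent` value. It assumes the version `00` layout from the W3C Trace Context specification (`version-traceid-parentid-flags`, all lowercase hex). The names `parse_traceparent` and `TraceParent` are hypothetical helpers for illustration, not part of SigNoz or the OpenTelemetry SDKs, which handle propagation for you.

```python
import re
from typing import NamedTuple, Optional

# Assumed layout (W3C Trace Context, version 00):
#   2 hex version - 32 hex trace ID - 16 hex parent span ID - 2 hex flags
_TRACEPARENT_RE = re.compile(
    r"^(00)-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$"
)


class TraceParent(NamedTuple):
    """Hypothetical container for the four traceparent fields."""
    version: str
    trace_id: str
    parent_span_id: str
    flags: str


def parse_traceparent(header: str) -> Optional[TraceParent]:
    """Return the parsed fields, or None if the header is malformed."""
    match = _TRACEPARENT_RE.match(header.strip())
    if match is None:
        return None
    version, trace_id, parent_span_id, flags = match.groups()
    # All-zero trace or span IDs are treated as invalid.
    if trace_id == "0" * 32 or parent_span_id == "0" * 16:
        return None
    return TraceParent(version, trace_id, parent_span_id, flags)


# The caller's Span ID arrives as the third field; the receiving service
# records it as the Parent Span ID of the span it creates.
incoming = "00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01"
parsed = parse_traceparent(incoming)
if parsed is not None:
    print("Parent Span ID for the new span:", parsed.parent_span_id)
```

In practice the instrumentation library performs this step, so a sketch like this is mainly useful when inspecting raw request headers while debugging context propagation.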

Learn more about how traces work in OpenTelemetry.

The root span is the first span in a trace — it does not have a Parent Span ID because no upstream service initiated it.

For example, consider three services — Frontend, Auth, and Database:

- Frontend creates the root span (Span ID: abc1, Parent ID: none).

- Frontend calls Auth, passing its Span ID abc1 in the traceparent header.

- Auth creates a new span (Span ID: def2, Parent ID: abc1).

- Auth calls Database, passing its Span ID def2.

- Database creates a new span (Span ID: ghi3, Parent ID: def2).

If Auth fails to export its span (Span ID: def2), the Database span will reference a Parent Span ID (def2) that does not exist in the collected trace — resulting in a missing span.
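The check described above can be sketched in a few lines of Python. This is an illustrative model only: the `Span` class and `find_spans_with_missing_parent` function are hypothetical names, not how SigNoz implements the check. The span IDs are the ones from the Frontend, Auth, and Database example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Span:
    """Hypothetical, simplified span record for illustration."""
    service: str
    span_id: str
    parent_span_id: Optional[str]  # None for the root span


def find_spans_with_missing_parent(spans: List[Span]) -> List[Span]:
    """Return spans whose Parent Span ID matches no collected span."""
    collected_ids = {span.span_id for span in spans}
    return [
        span
        for span in spans
        if span.parent_span_id is not None
        and span.parent_span_id not in collected_ids
    ]


full_trace = [
    Span("Frontend", "abc1", None),
    Span("Auth", "def2", "abc1"),
    Span("Database", "ghi3", "def2"),
]

# Simulate Auth failing to export its span.
collected_trace = [span for span in full_trace if span.service != "Auth"]

for span in find_spans_with_missing_parent(collected_trace):
    print(f"{span.service} span {span.span_id} references "
          f"missing parent {span.parent_span_id}")
```

With the full trace, the function returns an empty list. With the Auth span removed, only the Database span is reported, because its parent `def2` is no longer in the collected set.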

Span Gaps

Sometimes there are gaps between consecutive spans. This happens when some part of the process or code is not traced. For example, OpenTelemetry auto-instrumentation libraries do not trace custom functions or methods by default. You can close these gaps by adding manual instrumentation to the code.

Understanding Trace Duration Discrepancies

When analyzing latency metrics in the traces list, you may notice that the duration shown differs from what appears in the trace details. This is because:

The duration column in the traces list shows the duration of the root span, not the entire trace duration.

This can lead to apparent discrepancies for several reasons:

- The root span may complete before all child spans finish - The root operation might return a response while background tasks continue processing

- Asynchronous operations - Child spans can run longer than the root span when operations are performed asynchronously

- Total trace duration - The trace details view shows the complete duration including all spans, which may be longer than just the root span

Example Scenario

You might see a trace listed as 38.9 seconds on the traces page, but when you open the trace details, it shows a total duration of 1 minute. This happens when:

- The root span (the initial request handler) completed in 38.9 seconds

- Other spans in the trace (such as background jobs, async tasks, or queued operations) continued running

- The total time from the first span start to the last span end was 1 minute
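The difference between the two numbers can be reproduced with a small Python sketch. The span names and timestamps below are made-up values chosen to match the scenario above, and the `Span` tuple and both functions are hypothetical helpers, not SigNoz code.

```python
from typing import List, NamedTuple, Optional


class Span(NamedTuple):
    """Hypothetical, simplified span: times are seconds since trace start."""
    name: str
    parent: Optional[str]  # None for the root span
    start_s: float
    end_s: float


def root_span_duration(spans: List[Span]) -> float:
    """Duration of the root span (the value shown in the traces list)."""
    root = next(span for span in spans if span.parent is None)
    return root.end_s - root.start_s


def total_trace_duration(spans: List[Span]) -> float:
    """Time from the earliest span start to the latest span end."""
    first_start = min(span.start_s for span in spans)
    last_end = max(span.end_s for span in spans)
    return last_end - first_start


# Illustrative data: the request handler returns at 38.9 s while a
# background job it started keeps running until 60 s.
spans = [
    Span("request-handler", None, 0.0, 38.9),
    Span("enqueue-job", "request-handler", 5.0, 5.2),
    Span("background-job", "request-handler", 20.0, 60.0),
]

print(f"Root span duration:   {root_span_duration(spans):.1f} s")
print(f"Total trace duration: {total_trace_duration(spans):.1f} s")
```

Here the root span lasts 38.9 seconds, while the window from the first span start to the last span end is 60 seconds, which is why the two views can disagree.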

This behavior is expected and reflects how distributed systems actually work. If you need to measure specific service-to-service latencies rather than root span duration, see the writing ClickHouse queries documentation for guidance on using kind_string = 'Client' filters.

Get Help

If you need help with the steps in this topic, please reach out to us on SigNoz Community Slack.

If you are a SigNoz Cloud user, please use the in-product chat support in the bottom-right corner of your SigNoz instance, or contact us at cloud-support@signoz.io.